#News

Use of AI in scientific research may undermine the training of early-career researchers

Early reliance on AI tools may undermine learning, critical thinking and the authenticity of academic work, according to a researcher at Einstein

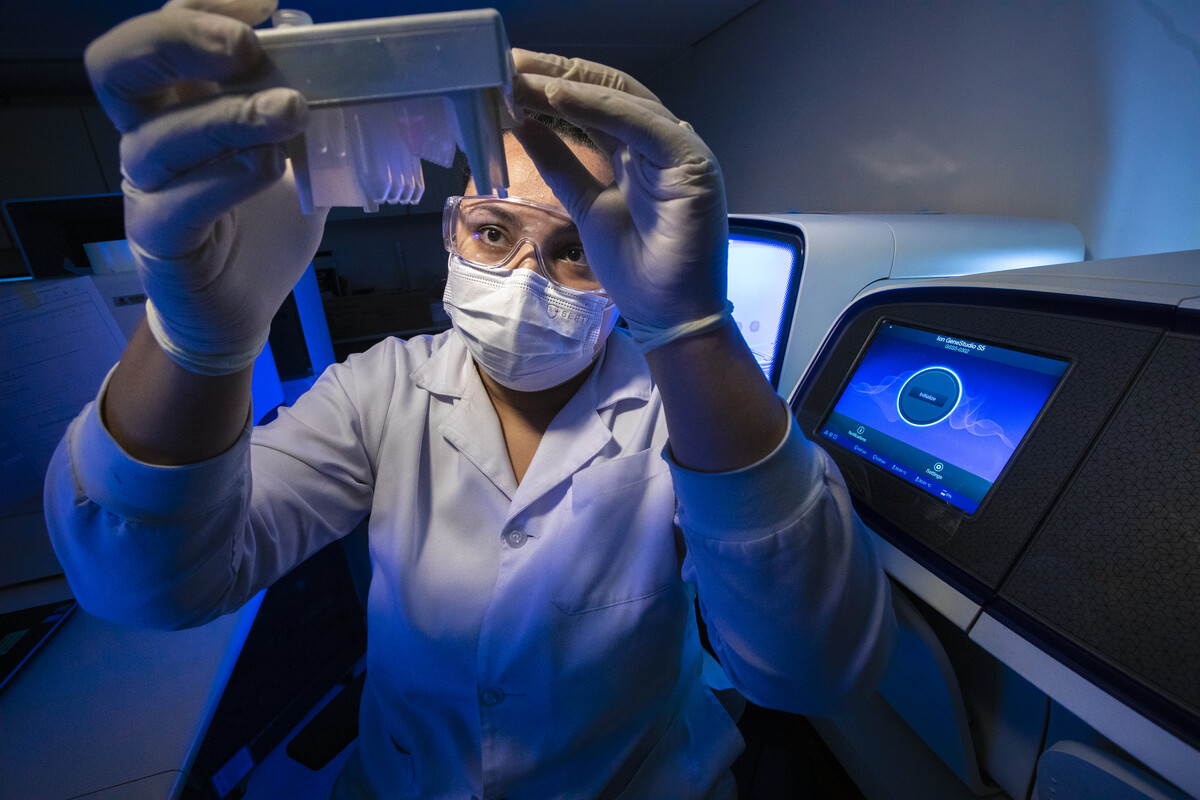

Early use of AI tools may undermine the development of critical thinking in researchers in training, according to a specialist at Einstein | Image

As it becomes increasingly capable of generating coherent, well-structured text, generative artificial intelligence (AI) is playing an ever-larger role in researchers' daily routines. While the technology has the potential to boost academic productivity, it also raises a question that continues to divide experts: what is the impact of intensive AI use on the training of early-career scientists?

A researcher's daily routine involves a wide range of tasks, from writing papers to analyzing data. AI can support these activities, freeing up time for more intellectually demanding work. The problem, according to specialists, lies in how this support is integrated into the learning process.

The gap between generations of researchers makes this particularly clear. Many established researchers developed their writing and analytical skills without such tools, whereas doctoral students entering the field today have unrestricted access to AI platforms and may never need to exercise these skills independently.

When AI undermines training

In an interview with Science Arena, biologist Helder Nakaya, a senior researcher at Hospital Israelita Albert Einstein, outlines two distinct scenarios. In the first, the researcher has already mastered core competencies, such as drafting a paper, interpreting data and structuring an argument, and uses AI to boost productivity or refine results. In this case, the risks are limited.

In the second scenario, the problem is structural: the researcher learns a skill with AI as a support tool from the very beginning.

“Studies have already shown that this does not activate the same areas of the brain in the same way as learning without the use of this ‘crutch,’” Nakaya says.

Nakaya also acknowledges the structural pressure pushing researchers toward intensive use of these tools: the growing demand for publications increases the need for writing and revision, making it difficult to forgo any resource that streamlines the process.

The impact on manuscript quality

Beyond individual training, the growing role of AI in scientific production raises concerns about quality and differentiation among researchers. “The ease of generating polished texts, with perfect English, makes it difficult to distinguish researchers’ quality based on manuscripts submitted for publication,” Nakaya says.

The result, he argues, is a crisis of authenticity: a text may be perceived not as genuine work but as a product of AI. In such cases, the work loses value and prestige within the scientific community.

Authenticity as a differentiator

In this context, authenticity may become an increasingly valuable asset in scientific careers. For Nakaya, it serves as a signal of credibility: the recognition of human effort, genuine curiosity and the ability to formulate original questions. These are qualities that AI, for now, cannot replicate.

To learn more about the use of artificial intelligence in scientific careers, see the full interview with Helder Nakaya on Science Arena.

*

This article may be republished online under the CC-BY-NC-ND Creative Commons license.

The text must not be edited and the author(s) and source (Science Arena) must be credited.